Fighting Spam

Fighting email spam these days mostly looks like this:

![1ab83c40625d102da1b3001438c0f03b.gif 1ab83c40625d102da1b3001438c0f03b.gif]()

Rspamd

Rspamd is a rapid spam filtering system. It is written in C with a Lua scripting engine for extensions, it seems to be really fast, and it is a really good solution for SOHO environments.

In this blog post, I’ll try to present a quickstart guide to working with rspamd on a CentOS 6.9 machine running postfix.

DISCLAIMER: This blog post is from a very technical point of view!

Installation

We are going to install rspamd via known rpm repositories:

Epel Repository

We need to install epel repository first:

# yum -y install http://fedora-mirror01.rbc.ru/pub/epel/6/x86_64/epel-release-6-8.noarch.rpm

Rspamd Repository

Now it is time to setup the rspamd repository:

# curl https://rspamd.com/rpm-stable/centos-6/rspamd.repo -o /etc/yum.repos.d/rspamd.repo

Install the gpg key

# rpm --import https://rspamd.com/rpm-stable/gpg.key

and verify the repositories with:

# yum repolist

repo id repo name

base CentOS-6 - Base

epel Extra Packages for Enterprise Linux 6 - x86_64

extras CentOS-6 - Extras

rspamd Rspamd stable repository

updates CentOS-6 - Updates

Rpm

Now it is time to install rspamd on our linux box:

# yum -y install rspamd

# yum info rspamd

Name : rspamd

Arch : x86_64

Version : 1.6.3

Release : 1

Size : 8.7 M

Repo : installed

From repo : rspamd

Summary : Rapid spam filtering system

URL : https://rspamd.com

License : BSD2c

Description : Rspamd is a rapid, modular and lightweight spam filter. It is designed to work

: with big amount of mail and can be easily extended with own filters written in

: lua.

Init File

We need to correct the rspamd init file so that rspamd can find the correct configuration file:

# vim /etc/init.d/rspamd

# ebal, Wed, 06 Sep 2017 00:31:37 +0300

## RSPAMD_CONF_FILE="/etc/rspamd/rspamd.sysvinit.conf"

RSPAMD_CONF_FILE="/etc/rspamd/rspamd.conf"
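For unattended installs the same edit can be scripted. This is only a minimal sketch: it assumes the init script holds a single uncommented RSPAMD_CONF_FILE= assignment at the start of a line, and the function name set_rspamd_conf_file is made up for this example.

```shell
#!/bin/sh
# Hypothetical helper: point the init script at rspamd.conf.
# $1 = path to the init script (for example /etc/init.d/rspamd)
set_rspamd_conf_file() {
    # -i.bak keeps the original as <file>.bak so the change can be reverted
    sed -i.bak \
        's|^RSPAMD_CONF_FILE=.*|RSPAMD_CONF_FILE="/etc/rspamd/rspamd.conf"|' \
        "$1"
}

# Usage (as root):
#   set_rspamd_conf_file /etc/init.d/rspamd
```

Unlike the manual edit, this does not leave the old value behind as a comment; the .bak copy plays that role.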

Start Rspamd

We are now ready to start the rspamd daemon for the first time:

# /etc/init.d/rspamd restart

syntax OK

Stopping rspamd: [FAILED]

Starting rspamd: [ OK ]

The [FAILED] on stopping is expected, because rspamd was not running yet. Verify that it is running:

# ps -e fuwww | egrep -i rsp[a]md

root 1337 0.0 0.7 205564 7164 ? Ss 20:19 0:00 rspamd: main process

_rspamd 1339 0.0 0.7 206004 8068 ? S 20:19 0:00 _ rspamd: rspamd_proxy process

_rspamd 1340 0.2 1.2 209392 12584 ? S 20:19 0:00 _ rspamd: controller process

_rspamd 1341 0.0 1.0 208436 11076 ? S 20:19 0:00 _ rspamd: normal process

Perfect, now it is time to enable rspamd to run on boot:

# chkconfig rspamd on

# chkconfig --list | egrep rspamd

rspamd 0:off 1:off 2:on 3:on 4:on 5:on 6:off

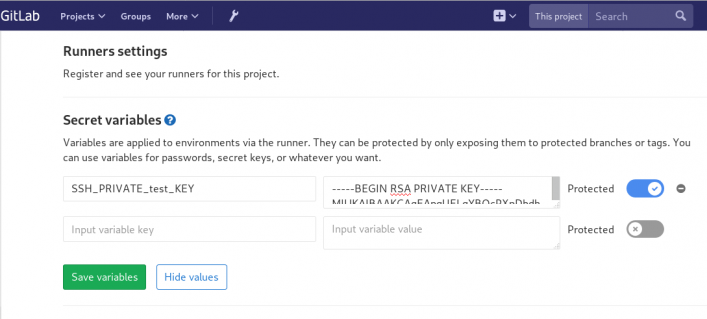

Postfix

In a nutshell, postfix will pass an email through another application (a filter) using the milter protocol before queuing it in one of postfix’s mail queues. Think of milter as a bridge that connects two different applications.

![rspamd_milter_direct.png rspamd_milter_direct.png]()

Rspamd Proxy

In Rspamd 1.6 Rmilter is obsolete, but the rspamd proxy worker supports the milter protocol. That means we need to connect postfix to rspamd_proxy via the milter protocol.

Rspamd has really nice documentation: https://rspamd.com/doc/index.html

You can find more info in its MTA integration section.

# netstat -ntlp | egrep -i rspamd

output:

tcp 0 0 0.0.0.0:11332 0.0.0.0:* LISTEN 1451/rspamd

tcp 0 0 0.0.0.0:11333 0.0.0.0:* LISTEN 1451/rspamd

tcp 0 0 127.0.0.1:11334 0.0.0.0:* LISTEN 1451/rspamd

tcp 0 0 :::11332 :::* LISTEN 1451/rspamd

tcp 0 0 :::11333 :::* LISTEN 1451/rspamd

tcp 0 0 ::1:11334 :::* LISTEN 1451/rspamd
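To check the listeners from a script instead of by eye, the netstat output can be reduced to a list of ports. A small sketch, assuming the column layout shown above (local address in the fourth column, PID/program name in the last); rspamd_ports is a made-up name.

```shell
#!/bin/sh
# Hypothetical helper: read `netstat -ntlp` output on stdin and print the
# distinct TCP ports that rspamd is listening on, one per line.
rspamd_ports() {
    awk '$1 ~ /^tcp/ && $NF ~ /rspamd/ {
             n = split($4, part, ":")   # local address; the port is the last piece
             print part[n]
         }' | sort -un
}

# Usage (as root, so that netstat can show the process names):
#   netstat -ntlp | rspamd_ports
```

For the output above this gives 11332, 11333 and 11334; the first is the proxy worker that postfix will talk to.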

# egrep -A1 proxy /etc/rspamd/rspamd.conf

worker "rspamd_proxy" {

bind_socket = "*:11332";

.include "$CONFDIR/worker-proxy.inc"

.include(try=true; priority=1,duplicate=merge) "$LOCAL_CONFDIR/local.d/worker-proxy.inc"

.include(try=true; priority=10) "$LOCAL_CONFDIR/override.d/worker-proxy.inc"

}

Milter

If you want to see all the possible postfix configuration parameters for a milter setup:

# postconf | egrep -i milter

output:

milter_command_timeout = 30s

milter_connect_macros = j {daemon_name} v

milter_connect_timeout = 30s

milter_content_timeout = 300s

milter_data_macros = i

milter_default_action = tempfail

milter_end_of_data_macros = i

milter_end_of_header_macros = i

milter_helo_macros = {tls_version} {cipher} {cipher_bits} {cert_subject} {cert_issuer}

milter_macro_daemon_name = $myhostname

milter_macro_v = $mail_name $mail_version

milter_mail_macros = i {auth_type} {auth_authen} {auth_author} {mail_addr} {mail_host} {mail_mailer}

milter_protocol = 6

milter_rcpt_macros = i {rcpt_addr} {rcpt_host} {rcpt_mailer}

milter_unknown_command_macros =

non_smtpd_milters =

smtpd_milters =

We are mostly interested in the last two, but it is best to follow the rspamd documentation:

# vim /etc/postfix/main.cf

and add the configuration lines below:

# ebal, Sat, 09 Sep 2017 18:56:02 +0300

## A list of Milter (mail filter) applications for new mail that does not arrive via the Postfix smtpd(8) server.

non_smtpd_milters = inet:127.0.0.1:11332

## A list of Milter (mail filter) applications for new mail that arrives via the Postfix smtpd(8) server.

smtpd_milters = inet:127.0.0.1:11332

## Send macros to mail filter applications

milter_mail_macros = i {auth_type} {auth_authen} {auth_author} {mail_addr} {client_addr} {client_name} {mail_host} {mail_mailer}

## skip mail without checks if something goes wrong, like rspamd is down !

milter_default_action = accept
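If you would rather not edit main.cf by hand, postconf -e can write the same parameters. A sketch under the assumption that the proxy worker listens on 127.0.0.1:11332 as shown earlier; configure_rspamd_milter is a made-up function name.

```shell
#!/bin/sh
# Hypothetical helper: apply the milter settings with `postconf -e`,
# which rewrites main.cf in place.
configure_rspamd_milter() {
    milter="inet:127.0.0.1:11332"   # assumption: rspamd_proxy on its default port
    postconf -e "non_smtpd_milters = $milter" &&
    postconf -e "smtpd_milters = $milter" &&
    postconf -e "milter_mail_macros = i {auth_type} {auth_authen} {auth_author} {mail_addr} {client_addr} {client_name} {mail_host} {mail_mailer}" &&
    postconf -e "milter_default_action = accept"
}

# Usage (as root):
#   configure_rspamd_milter && postfix reload
```

Running postconf -n | egrep milter afterwards shows only the values that now differ from the defaults.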

Reload postfix

# postfix reload

postfix/postfix-script: refreshing the Postfix mail system

Testing

netcat

From a client:

$ nc 192.168.122.96 25

220 centos69.localdomain ESMTP Postfix

EHLO centos69

250-centos69.localdomain

250-PIPELINING

250-SIZE 10240000

250-VRFY

250-ETRN

250-ENHANCEDSTATUSCODES

250-8BITMIME

250 DSN

MAIL FROM: <root@example.org>

250 2.1.0 Ok

RCPT TO: <root@localhost>

250 2.1.5 Ok

DATA

354 End data with <CR><LF>.<CR><LF>

test

.

250 2.0.0 Ok: queued as 4233520144

^]

Logs

Looking through logs may be a difficult task for many; even so, it is a task that you have to do.

MailLog

# egrep 4233520144 /var/log/maillog

Sep 9 19:08:01 localhost postfix/smtpd[1960]: 4233520144: client=unknown[192.168.122.1]

Sep 9 19:08:05 localhost postfix/cleanup[1963]: 4233520144: message-id=<>

Sep 9 19:08:05 localhost postfix/qmgr[1932]: 4233520144: from=<root@example.org>, size=217, nrcpt=1 (queue active)

Sep 9 19:08:05 localhost postfix/local[1964]: 4233520144: to=<root@localhost.localdomain>, orig_to=<root@localhost>, relay=local, delay=12, delays=12/0.01/0/0.01, dsn=2.0.0, status=sent (delivered to mailbox)

Sep 9 19:08:05 localhost postfix/qmgr[1932]: 4233520144: removed

Everything seems fine with postfix.

Rspamd Log

# egrep -i 4233520144 /var/log/rspamd/rspamd.log

2017-09-09 19:08:05 #1455(normal) <79a04e>; task; rspamd_message_parse: loaded message; id: <undef>; queue-id: <4233520144>; size: 6; checksum: <a6a8e3835061e53ed251c57ab4f22463>

2017-09-09 19:08:05 #1455(normal) <79a04e>; task; rspamd_task_write_log: id: <undef>, qid: <4233520144>, ip: 192.168.122.1, from: <root@example.org>, (default: F (add header): [9.40/15.00] [MISSING_MID(2.50){},MISSING_FROM(2.00){},MISSING_SUBJECT(2.00){},MISSING_TO(2.00){},MISSING_DATE(1.00){},MIME_GOOD(-0.10){text/plain;},ARC_NA(0.00){},FROM_NEQ_ENVFROM(0.00){;root@example.org;},RCVD_COUNT_ZERO(0.00){0;},RCVD_TLS_ALL(0.00){}]), len: 6, time: 87.992ms real, 4.723ms virtual, dns req: 0, digest: <a6a8e3835061e53ed251c57ab4f22463>, rcpts: <root@localhost>

It works !
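Most of the 9.40 points in that log line come from the MISSING_MID, MISSING_FROM, MISSING_SUBJECT, MISSING_TO and MISSING_DATE symbols, because the message typed into netcat had no headers at all. For a more realistic test, here is a sketch that builds a minimal message with those headers; the function name and the subject text are made up, the addresses are the ones from the test above.

```shell
#!/bin/sh
# Hypothetical helper: print a minimal message that carries the headers the
# netcat test lacked (From, To, Subject, Date, Message-ID).
make_test_message() {
    # LC_ALL=C keeps day and month names in English, as mail headers require
    now=$(LC_ALL=C date '+%a, %d %b %Y %H:%M:%S %z')
    printf 'From: root@example.org\n'
    printf 'To: root@localhost\n'
    printf 'Subject: rspamd test\n'
    printf 'Date: %s\n' "$now"
    printf 'Message-ID: <%s.%s@example.org>\n' "$(date +%s)" "$$"
    printf '\n'            # blank line separates headers from body
    printf 'test\n'
}

# Usage: scan the message with the rspamc client, which reads stdin
# when no file is given:
#   make_test_message | rspamc
```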

Training

If you already have a spam or junk folder, it is really easy to train the Bayesian classifier with rspamc.

I use Maildir, so for my setup the initial training is something like this:

# cd /storage/vmails/balaskas.gr/evaggelos/.Spam/cur/

# find . -type f -exec rspamc learn_spam {} \;

Auto-Training

I’ve read a lot of tutorials that suggest real-time training via dovecot plugins or something similar. I personally think that approach adds complexity, and for small companies or a personal setup I prefer using the cron daemon:

@daily /bin/find /storage/vmails/balaskas.gr/evaggelos/.Spam/cur/ -type f -mtime -1 -exec rspamc learn_spam {} \;

That means every day, search for new emails in my spam folder and use them to train rspamd.
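If the one-liner grows (more folders, ham as well as spam), it may be easier to keep it in a small script that cron calls. A sketch only: learn_recent is a made-up name, and which folder holds ham (legitimate mail, learned with rspamc learn_ham) depends entirely on your setup.

```shell
#!/bin/sh
# Hypothetical helper: learn every message that landed in a Maildir folder
# during the last 24 hours.
# $1 = class (spam or ham), $2 = Maildir "cur" directory
learn_recent() {
    case "$1" in
        spam|ham) ;;
        *) echo "usage: learn_recent spam|ham DIR" >&2; return 2 ;;
    esac
    # "{} +" hands many files to one rspamc call instead of one call per file
    find "$2" -type f -mtime -1 -exec rspamc "learn_$1" {} +
}

# Usage, for example from a script that cron runs daily:
#   learn_recent spam /storage/vmails/balaskas.gr/evaggelos/.Spam/cur/
```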

Training from mbox

First of all, seriously?

Split mbox

There is a nice and simple way to split an mbox into separate files for rspamc to use:

# awk '/^From / {i++} {print > ("msg" i)}' Spam

and then feed rspamc:

# ls -1 msg* | xargs rspamc --verbose learn_spam
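For a large mbox, here is a slightly more defensive sketch of the same split: it writes into a separate directory, zero-pads the file names and closes each file before opening the next, since some awk implementations limit the number of open files. The function name and the /tmp/spam-split path are made up for this example.

```shell
#!/bin/sh
# Hypothetical helper: split an mbox file into one file per message.
# $1 = mbox file, $2 = existing output directory
split_mbox() {
    awk -v dir="$2" '
        /^From / {                       # mbox separator: a new message starts
            if (out != "") close(out)    # avoid running out of open files
            out = sprintf("%s/msg%05d", dir, ++n)
        }
        out != "" { print > out }        # skip anything before the first separator
    ' "$1"
}

# Usage:
#   mkdir -p /tmp/spam-split
#   split_mbox Spam /tmp/spam-split
#   find /tmp/spam-split -type f -exec rspamc learn_spam {} +
```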

Stats

# rspamc stat

Results for command: stat (0.068 seconds)

Messages scanned: 2

Messages with action reject: 0, 0.00%

Messages with action soft reject: 0, 0.00%

Messages with action rewrite subject: 0, 0.00%

Messages with action add header: 2, 100.00%

Messages with action greylist: 0, 0.00%

Messages with action no action: 0, 0.00%

Messages treated as spam: 2, 100.00%

Messages treated as ham: 0, 0.00%

Messages learned: 1859

Connections count: 2

Control connections count: 2157

Pools allocated: 2191

Pools freed: 2170

Bytes allocated: 542k

Memory chunks allocated: 41

Shared chunks allocated: 10

Chunks freed: 0

Oversized chunks: 736

Fuzzy hashes in storage "rspamd.com": 659509399

Fuzzy hashes stored: 659509399

Statfile: BAYES_SPAM type: sqlite3; length: 32.66M; free blocks: 0; total blocks: 430.29k; free: 0.00%; learned: 1859; users: 1; languages: 4

Statfile: BAYES_HAM type: sqlite3; length: 9.22k; free blocks: 0; total blocks: 0; free: 0.00%; learned: 0; users: 1; languages: 1

Total learns: 1859
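The two Statfile lines are the ones worth watching when training from cron: in the output above BAYES_SPAM has learned 1859 messages while BAYES_HAM has learned none, so the classifier has only been shown spam so far. A sketch that pulls just those counters out of the stat output; learned_counts is a made-up name and the pattern assumes the line format shown above.

```shell
#!/bin/sh
# Hypothetical helper: read `rspamc stat` output on stdin and print the
# number of learned messages per Bayes statfile.
learned_counts() {
    # Statfile lines look like: "Statfile: BAYES_SPAM type: ...; learned: 1859; ..."
    sed -n 's/^Statfile: \([A-Z_]*\) .*learned: \([0-9]*\);.*/\1 \2/p'
}

# Usage:
#   rspamc stat | learned_counts
```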

X-Spamd-Result

To view the spam score in every email, we need to enable extended reporting headers, and to do that we need to edit our configuration:

# vim /etc/rspamd/modules.d/milter_headers.conf

and just above the use line, add:

# ebal, Wed, 06 Sep 2017 01:52:08 +0300

extended_spam_headers = true;

use = [];

then reload rspamd:

# /etc/init.d/rspamd reload

syntax OK

Reloading rspamd: [ OK ]

View Source

If you open the email in view-source, you will see something like this:

X-Rspamd-Queue-Id: D0A5728ABF

X-Rspamd-Server: centos69

X-Spamd-Result: default: False [3.40 / 15.00]
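Once the header is there, the score can also be read from the stored message on the command line. A sketch that assumes the first line of the header has the form shown above; spam_score is a made-up name.

```shell
#!/bin/sh
# Hypothetical helper: print the rspamd score recorded in a stored message.
# $1 = message file (for example a file in a Maildir "cur" directory)
spam_score() {
    # Matches e.g. "X-Spamd-Result: default: False [3.40 / 15.00]" and keeps
    # the number before the slash; only the first match is printed.
    sed -n 's|^X-Spamd-Result: .*\[\(-\{0,1\}[0-9.]*\) / [0-9.]*\].*|\1|p' "$1" |
        head -n 1
}

# Usage:
#   spam_score /path/to/Maildir/cur/some-message-file
```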

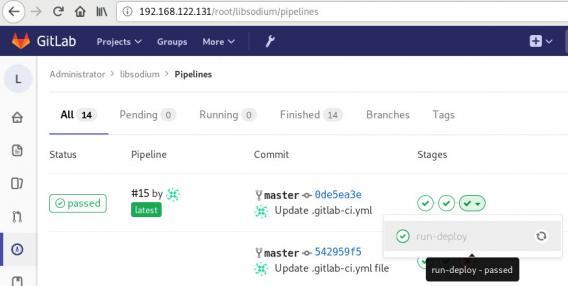

Web Server

Rspamd comes with its own web server. That is really useful if you don’t have a web server on your mail server, but it is not recommended.

By default, the rspamd web server only listens for local connections. We can see that from the ss output below:

# ss -lp | egrep -i rspamd

LISTEN 0 128 :::11332 :::* users:(("rspamd",7469,10),("rspamd",7471,10),("rspamd",7472,10),("rspamd",7473,10))

LISTEN 0 128 *:11332 *:* users:(("rspamd",7469,9),("rspamd",7471,9),("rspamd",7472,9),("rspamd",7473,9))

LISTEN 0 128 :::11333 :::* users:(("rspamd",7469,18),("rspamd",7473,18))

LISTEN 0 128 *:11333 *:* users:(("rspamd",7469,16),("rspamd",7473,16))

LISTEN 0 128 ::1:11334 :::* users:(("rspamd",7469,14),("rspamd",7472,14),("rspamd",7473,14))

LISTEN 0 128 127.0.0.1:11334 *:* users:(("rspamd",7469,12),("rspamd",7472,12),("rspamd",7473,12))

127.0.0.1:11334

So if you want to change that (don’t), you have to edit rspamd.conf (the core file):

# vim +/11334 /etc/rspamd/rspamd.conf

and change this line:

bind_socket = "localhost:11334";

to something like this:

bind_socket = "YOUR_SERVER_IP:11334";

or use sed:

# sed -i -e 's/localhost:11334/YOUR_SERVER_IP:11334/' /etc/rspamd/rspamd.conf

and then fire up your browser:

![rspamd_worker.png]()

Web Password

It is good practice to change the default password of this web GUI to something else.

# vim /etc/rspamd/worker-controller.inc

# password = "q1";

password = "password";

It is always a good idea to restart rspamd afterwards.

Reverse Proxy

I don’t like exposing any web app without SSL or basic authentication, so I shall put the rspamd web server behind a reverse proxy (apache).

So on httpd-2.2 the configuration is something like this:

ProxyPreserveHost On

<Location /rspamd>

AuthName "Rspamd Access"

AuthType Basic

AuthUserFile /etc/httpd/rspamd_htpasswd

Require valid-user

ProxyPass http://127.0.0.1:11334

ProxyPassReverse http://127.0.0.1:11334

Order allow,deny

Allow from all

</Location>

Http Basic Authentication

You need to create the file that is going to store the usernames and passwords for basic authentication:

# htpasswd -csb /etc/httpd/rspamd_htpasswd rspamd rspamd_passwd

Adding password for user rspamd

Restart your apache instance.

bind_socket

Of course, for this to work we need to change the bind socket in rspamd.conf.

Don’t forget this ;)

bind_socket = "127.0.0.1:11334";

Selinux

If there is a problem with selinux, then:

# setsebool -P httpd_can_network_connect=1

or

# setsebool httpd_can_network_connect_db on

Errors ?

If you see an error like this:

IO write error

when running rspamc, then you need to explicitly tell rspamc which address to use:

rspamc -h 127.0.0.1:11334

To prevent any future errors, I’ve created a shell wrapper:

/usr/local/bin/rspamc

#!/bin/sh

# "$@" keeps arguments with spaces (such as file names) intact, unlike $*
exec /usr/bin/rspamc -h 127.0.0.1:11334 "$@"

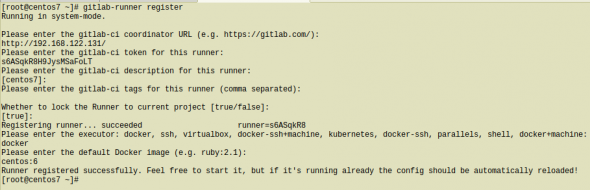

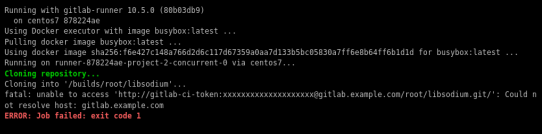

Final Thoughts

I have been using rspamd for a while now and I am pretty happy with it.

I’ve set up a spamtrap email address to feed my spam folder and let the cron script train rspamd.

So after a thousand emails:

![rspamd1k.jpg]()